Machine learning can be thought of as working with data so that we get answers to the questions we have. More precisely, it is the development of a function that uses your data to give approximate answers to those questions.

My argument is: given the three time frames (past, present, future), we basically look for:

- What the thing is – identity / classification

- What’s inside the thing – composition / decomposition

- What’s not in the thing – negation / contrast / boundary definition

And these three apply within each time frame:

| Time frame | What it is | What’s inside it | What’s not in it |
|---|---|---|---|
| Present | Current label | Current components | Current exclusions |
| Past | Previous identity | Previous composition | Previous exclusions |
| Future | Predicted identity | Predicted composition | Predicted exclusions |
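To make the grid concrete, here is a minimal sketch that enumerates the nine questions for a single object. The names `TIME_FRAMES`, `QUESTION_TYPES`, and `nine_questions` are made up for illustration; the only thing taken from the text is the three-by-three structure.

```python
from itertools import product

# Three time frames crossed with three question types gives nine questions.
TIME_FRAMES = ("past", "present", "future")
QUESTION_TYPES = {
    "identity": "What is it?",
    "composition": "What's inside it?",
    "negation": "What's not in it?",
}


def nine_questions(obj_name):
    """Return one (time_frame, question_type, question_text) triple per grid cell."""
    return [
        (frame, qtype, f"[{frame}] {text} ({obj_name})")
        for frame, (qtype, text) in product(TIME_FRAMES, QUESTION_TYPES.items())
    ]


questions = nine_questions("ball")
for frame, qtype, text in questions:
    print(text)
```

Every object gets the same nine slots; what changes from problem to problem is which slots we have data for and which ones we want a model to fill in.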

But all of these possibilities map onto the same object. For example, say you have a picture of a ball. With it we can try to answer:

- What is the ball like currently?

- What is the ball made of?

- What would the ball be like in the future?

- What was the ball like in the past?

- What is the ball not made of in the past, present, or future?

The meta layer to this is when we try to answer questions about other objects, like “What were the other balls like?” or “What is the ball adjacent to it like right now, and what was it like in the past or will it be like in the future?”

For example, take text in the present time frame:

You have a block of text. You can identify what the block is, what the sentiment is, and what the content is. You can also say what’s in it, including what words are in it and what words are not.
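As a rough sketch of those three present-time questions on a block of text: the keyword lists and the `describe_block` helper below are hypothetical stand-ins (a real system would use a trained classifier for sentiment), and “what’s not in it” only makes sense relative to some reference vocabulary that we supply.

```python
import re

# Hypothetical keyword lists, used only to make the "identity" question concrete.
POSITIVE = {"great", "love", "good"}
NEGATIVE = {"bad", "hate", "poor"}


def describe_block(text, reference_vocab):
    """Answer identity / composition / negation for one block of text."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    score = len(words & POSITIVE) - len(words & NEGATIVE)
    sentiment = "positive" if score > 0 else "negative" if score < 0 else "neutral"
    return {
        "identity": sentiment,                                   # what it is
        "inside": sorted(words),                                 # what's in it
        "not_inside": sorted(set(reference_vocab) - words),      # what's not in it
    }


result = describe_block(
    "I love this ball, it is great",
    reference_vocab={"ball", "red", "hate", "love"},
)
print(result)
```

Note that all three answers come from the block itself plus a fixed reference; nothing here says anything about other blocks of text.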

LLMs are amazing because they can now tell you what came before or after this block. In doing so, they are essentially approximating an entirely new object.

We are talking about being able to look at data, learn patterns from it, and create a new object that was never part of the training data.

This is no longer about the same object across time. It’s about relations between distinct objects in a shared context (space, sequence, graph, or discourse).

The object’s own data cannot tell us much about other objects, and we won’t have data about all of them. So we have to figure out how our current object relates to other objects from the bits and pieces we have about them, and then try to reconstruct those other objects from that.

In LLMs, the training data is pieces of text, and the model generates new pieces of text: objects that were never in its training data.
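A toy illustration of producing objects that were never in the training data: the sketch below learns only which word followed which in two training sentences, then lists every sentence those learned relations allow. This bigram model is a deliberately tiny stand-in chosen for clarity, not how an LLM actually works, and the function names are made up for the example.

```python
from collections import defaultdict


def build_bigrams(sentences):
    """Map each word to the set of words that followed it in training."""
    follows = defaultdict(set)
    for sentence in sentences:
        words = ["<s>"] + sentence.split() + ["</s>"]
        for prev, nxt in zip(words, words[1:]):
            follows[prev].add(nxt)
    return follows


def enumerate_sentences(follows, max_len=6):
    """List every sentence reachable by chaining seen bigrams from <s> to </s>."""
    results = []
    stack = [("<s>", [])]
    while stack:
        word, path = stack.pop()
        for nxt in sorted(follows[word]):
            if nxt == "</s>":
                results.append(" ".join(path))
            elif len(path) < max_len:  # bound the length in case of cycles
                stack.append((nxt, path + [nxt]))
    return sorted(results)


training = ["the ball is red", "the box is blue"]
generated = enumerate_sentences(build_bigrams(training))
novel = [s for s in generated if s not in training]
print(novel)  # ['the ball is blue', 'the box is red']
```

The two novel sentences use only word-to-word relations seen in training, yet neither sentence appears in the training set. That is the small-scale version of reconstructing other objects from learned relations.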

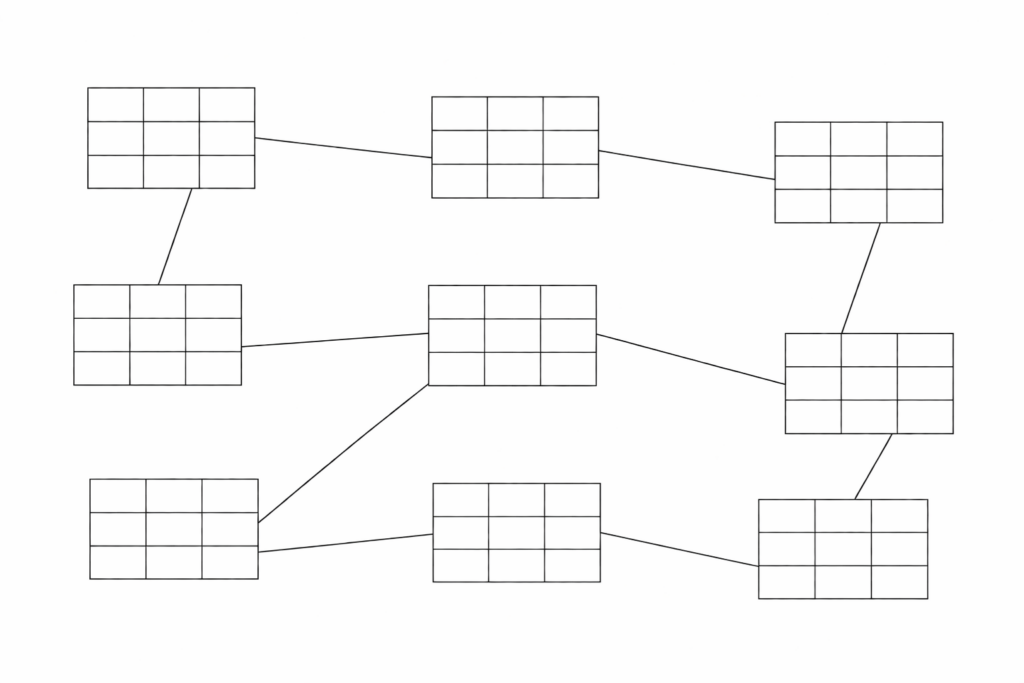

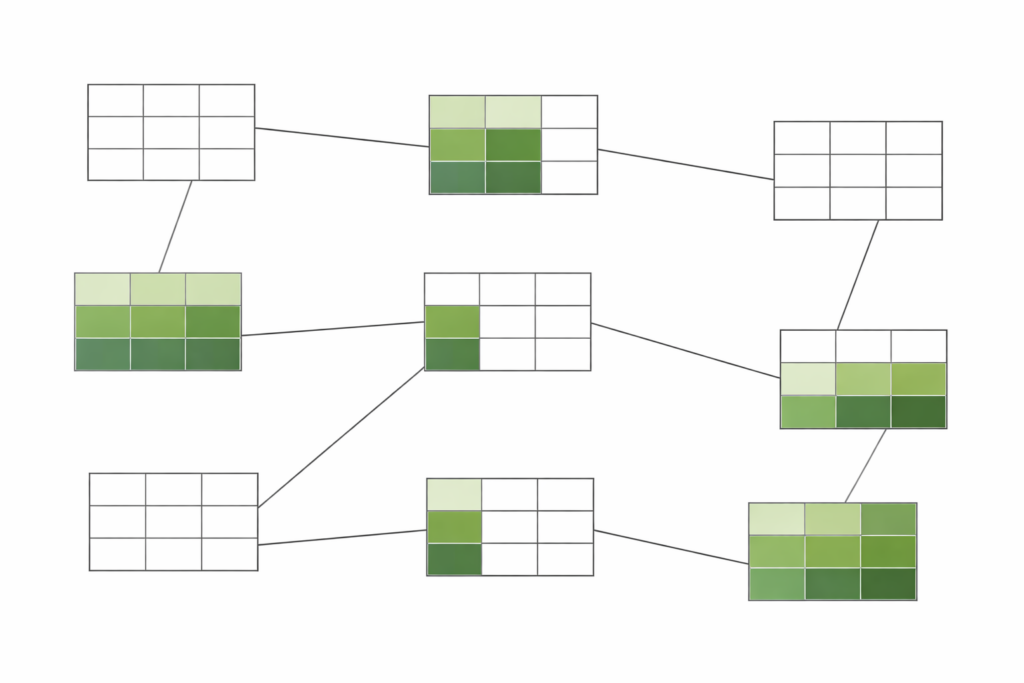

The whole scope can be imagined as one object and its nine questions (three question types across the three time frames). Now imagine many such objects, and try to answer their nine questions using the object we have as training data.

And of course we are not going to have all the data for all the objects, but we try to get as much as we can.

With whatever data we have, we try to establish the relations between the objects so that we can recreate them and answer the nine questions.

We saw that the scope goes from 0 to 100 real quick. It went from just telling you what’s inside the object to predicting other objects and everything about them.

Naturally, a different scope of prediction needs a different scope of data.

The Platonic ideal of data would be all nine questions and their answers, for all objects, available to train on. Such data would let us generate new objects and answer their nine questions.

This is what we see in today’s AI arms race: people want to get their hands on any type of data they can find so they can train their models on it. The more data a model has, the more competent it becomes at answering these meta questions.

Still, let’s make a quick table that roughly shows what type of data we need for each type of question.

| Question Type | Time Frame | Data Needed | Example |
|---|---|---|---|
| What is it? (Classification) | Present | Labeled examples of the thing | Images with class labels |
| What is it? (Classification) | Past | Historical records with labels | Old medical records with diagnoses |
| What is it? (Classification) | Future | Time-series of labeled states | Stock categories across quarters |
| What’s inside it? (Decomposition) | Present | Structured/annotated internals | Part-segmented images, parse trees |
| What’s inside it? (Decomposition) | Past | Archived compositional data | Historical ingredient lists, old schematics |
| What’s inside it? (Decomposition) | Future | Sequential compositional change data | Material degradation logs over time |
| What’s not in it? (Negation) | Present | Negative examples, contrastive pairs | What a tumor scan is not, OOD samples |
| What’s not in it? (Negation) | Past | Records of absence or exclusion | What ingredients a recipe never used |
| What’s not in it? (Negation) | Future | Anomaly baselines, failure mode logs | What a healthy engine reading won’t show |
| Relations to other objects | Any | Co-occurring, linked, or contextual data | Text corpora, knowledge graphs, scene graphs |
| Reconstructing unknown objects | Any | Partial observations across many objects | Web-scale text, satellite imagery mosaics |